Summary

ACCESSPACE’s high-level goal was to allow visually impaired people (VIP) to navigate autonomously, indoors and outdoors, by helping them intuitively perceive where they are and where they want to go, choose how to get there, and avoid obstacles along the way.

ACCESSPACE had three main axes of research:

1) Devise an intuitive and efficient way to provide real-time spatial cues through tactile signals

2) Design a haptic interface that conveys this spatial representation to the user easily

3) Implement the software required to support the various functions of this interface (e.g. indoor localisation, obstacle detection, mapping, …)

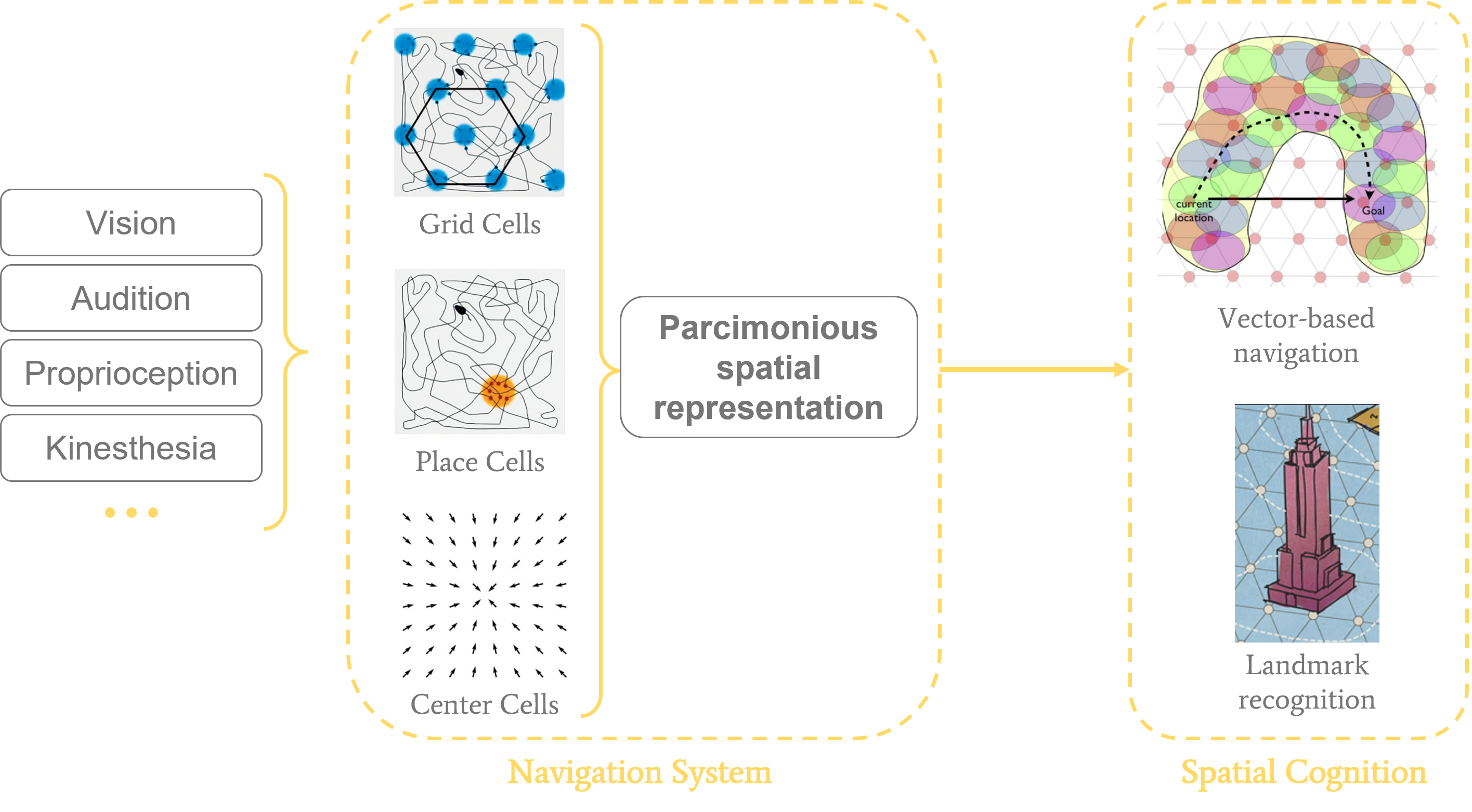

The guiding theoretical principle of this project was to use the brain’s navigation system as a source of inspiration for deciding what information to provide to VIP in order to make navigation as intuitive as possible. The gist of the idea is that our (biological) navigation system combines and distills information from multiple senses into a set of key spatial properties (e.g. where the center of the current room is located). This spatial representation allows us to reason about the structure of our environment and to navigate it efficiently. For VIP, this process is impaired because our navigation system relies heavily on visual information. To make our assistive device more intuitive, instead of substituting vision in its entirety, we focused on directly providing the information that our navigation system would extract from vision.

Thus, we devised an encoding scheme that provides the user with three types of information through egocentric tactile feedback:

1) The orientation and distance to the destination of the journey (as the crow flies)

2) The available path possibilities around the user (i.e. the various branching streets they could take, the current room’s center)

3) The closest obstacles
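
The first cue, the orientation and distance to the destination, comes down to a little geometry. The sketch below is a minimal Java illustration, not the project's actual code. It assumes that the user's position and the destination are given as x/y coordinates in metres on a local flat map, and that the user's heading is known in degrees, measured counter-clockwise from the x axis. The class `EgocentricCue` and its method names are hypothetical.

```java
/** Hypothetical helper: direction and straight-line distance to a destination, from the user's point of view. */
final class EgocentricCue {

    /** Straight-line ("as the crow flies") distance in metres between the user and the destination. */
    static double distance(double userX, double userY, double destX, double destY) {
        return Math.hypot(destX - userX, destY - userY);
    }

    /**
     * Direction of the destination relative to the user's heading, in degrees within [-180, 180).
     * 0 means straight ahead, positive values are to the user's left (counter-clockwise),
     * negative values are to the user's right.
     * Assumption: headingDeg is measured counter-clockwise from the +x axis.
     */
    static double relativeBearingDeg(double userX, double userY, double headingDeg,
                                     double destX, double destY) {
        // Absolute direction of the destination in the map frame.
        double absoluteDeg = Math.toDegrees(Math.atan2(destY - userY, destX - userX));
        // Express it relative to where the user is facing.
        double relativeDeg = absoluteDeg - headingDeg;
        // Wrap the result into [-180, 180).
        return ((relativeDeg + 180.0) % 360.0 + 360.0) % 360.0 - 180.0;
    }
}
```

For a user at the origin facing along the y axis (heading of 90°), a destination at (3, 4) lies 5 m away and roughly 37° to the right, which this sketch reports as a negative bearing.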

My contributions to the project:

1) Managed the literature review for all axes of the project (spatial cognition, assistive devices, sensory substitution, computer vision, …).

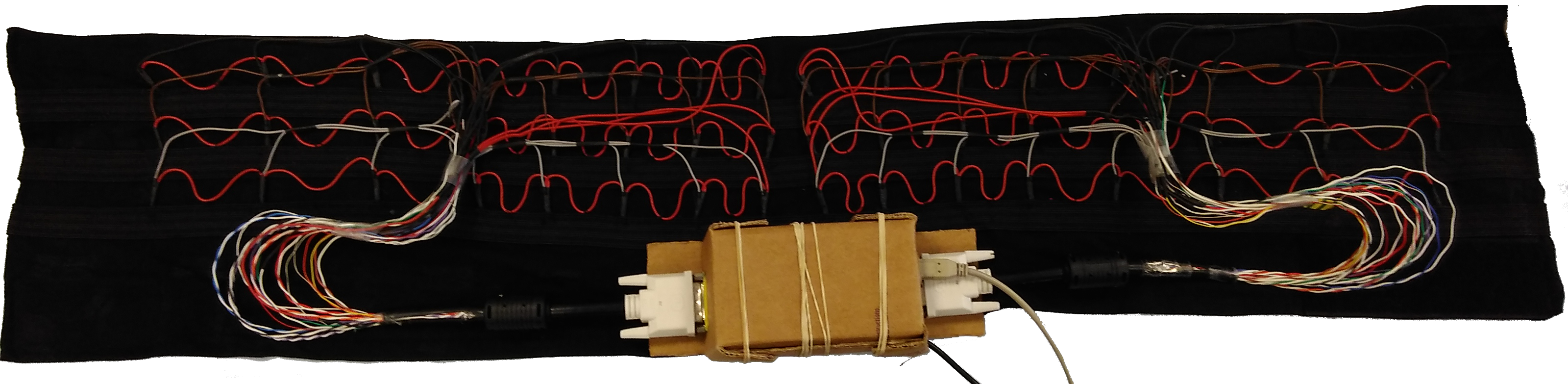

2) Designed the TactiBelt and participated in its conception (Arduino).

3) Participated in the development of a Java application to control and evaluate the TactiBelt in a Pac-Man-like game.

4) Handled the preliminary experimental evaluations of the TactiBelt, and the analyses of the collected data.

5) Was involved in writing a journal paper (Faugloire et al., 2022) and a conference paper (Rivière et al., 2018).

6) Disseminated the project through a talk at an international conference, and various outreach events.

Details

Our interface: the TactiBelt

To deliver the proposed egocentric encoding scheme to the user, we designed a vibro-tactile belt, the TactiBelt. It comprises 46 ERM (eccentric rotating mass) motors arranged in three layers and driven by an Arduino Mega, itself controlled by dedicated software written in Java.
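
How a direction could be turned into a vibration on such a belt can be sketched as follows. This is an illustration under stated assumptions, not the TactiBelt's actual control software. It supposes that one layer forms a ring of evenly spaced motors, with motor 0 at the front and indices increasing counter-clockwise, and that vibration strength is an integer from 0 to 255 that grows as the target gets closer. The number of motors per ring, the maximum range, and all names (`BeltMapping`, `motorIndex`, `intensity`) are hypothetical.

```java
/** Hypothetical mapping from an egocentric direction and distance to a motor and a vibration strength. */
final class BeltMapping {

    /**
     * Index of the motor closest to the given direction.
     * Assumption: motors are evenly spaced around the waist, motor 0 is at the front,
     * and indices increase counter-clockwise (towards the user's left).
     */
    static int motorIndex(double relativeBearingDeg, int motorsInRing) {
        if (motorsInRing <= 0) {
            throw new IllegalArgumentException("motorsInRing must be positive");
        }
        double stepDeg = 360.0 / motorsInRing;
        // Wrap the bearing into [0, 360) so that negative (right-hand) angles are handled.
        double wrappedDeg = ((relativeBearingDeg % 360.0) + 360.0) % 360.0;
        // Round to the nearest motor; the modulo folds the last half-step back onto motor 0.
        return (int) Math.round(wrappedDeg / stepDeg) % motorsInRing;
    }

    /**
     * Vibration strength from 0 (target at or beyond maxRangeM) to 255 (target at distance 0).
     * Assumption: a simple linear ramp; the real device may use a different profile.
     */
    static int intensity(double distanceM, double maxRangeM) {
        if (maxRangeM <= 0.0) {
            throw new IllegalArgumentException("maxRangeM must be positive");
        }
        double clamped = Math.max(0.0, Math.min(distanceM, maxRangeM));
        return (int) Math.round(255.0 * (1.0 - clamped / maxRangeM));
    }
}
```

With a hypothetical ring of 16 motors, a target straight ahead maps to motor 0, a target 90° to the left maps to motor 4, and a target 90° to the right maps to motor 12.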

Software tools

To capture and extract the information we need from the VIP’s environment, we developed a series of software tools relying mostly on computer vision:

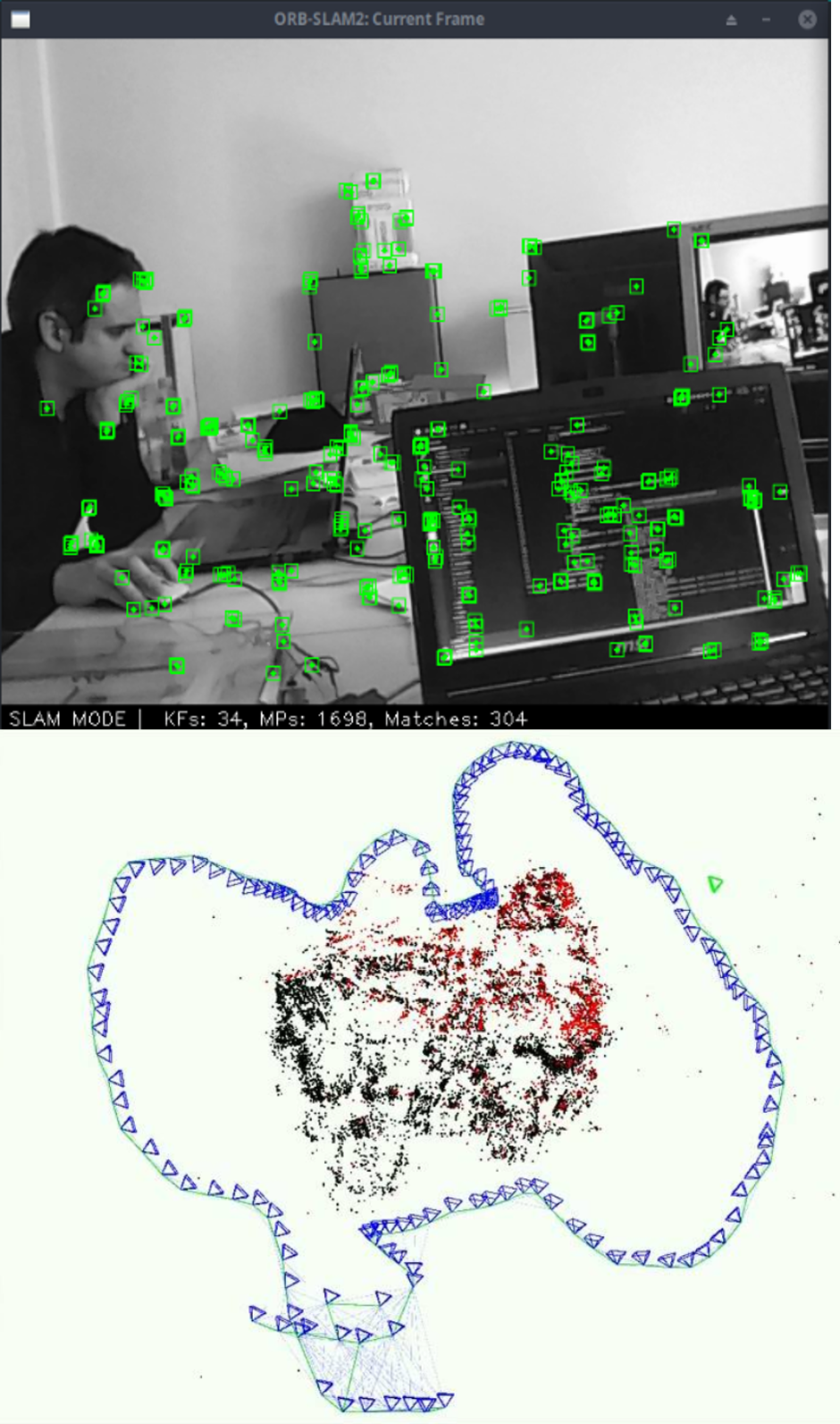

1) Obstacle detection and indoor localisation using the ORB-SLAM algorithm:

2) Depth estimation from a monocular RGB camera, using the MonoDepth algorithm:

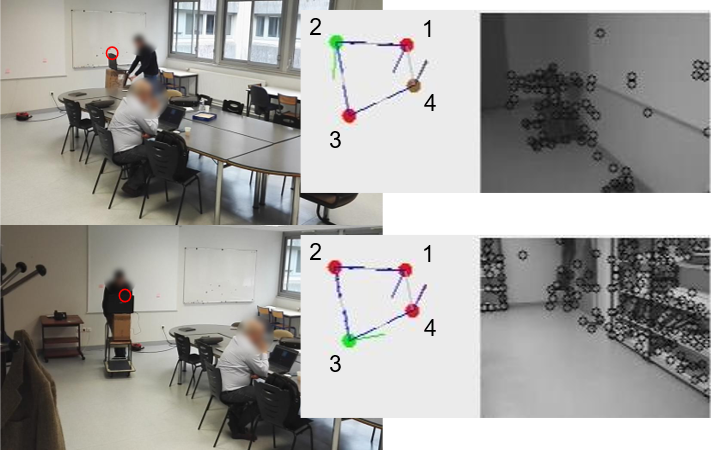

3) Generating a mobility graph of the environment during movement using reinforcement learning:

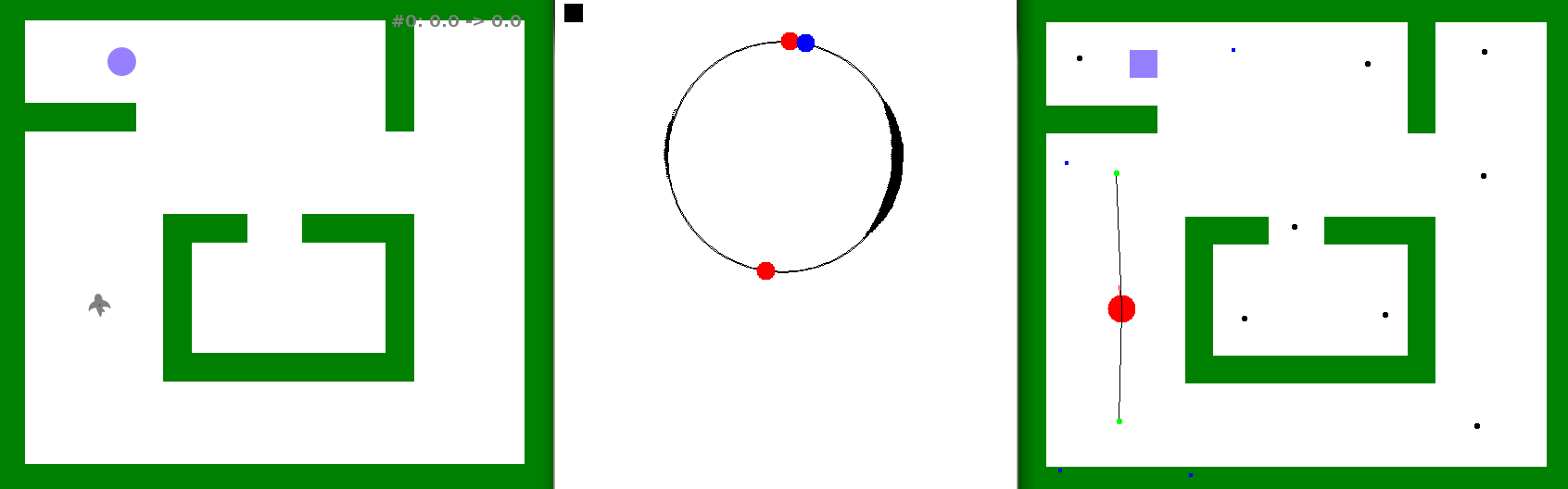

Applied to an artificial agent exploring a virtual maze, looking for food:

Applied to a real agent (a person pushing a cart, carrying a camera and the computer running the algorithm, around a meeting table):

4) A virtual environment to test the TactiBelt and our candidate spatial encoding schemes:
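
Returning to the depth estimation step in the list above, the sketch below illustrates one way a depth map could be reduced to the "closest obstacles" cue. It is a minimal Java example under assumptions, not the project's pipeline. It supposes that the depth map is already available as a 2D array of distances in metres (a monocular network's raw output would first need converting and scaling), and that the image is split into vertical bands, one per direction of interest. The class `NearestObstacle` and its method are hypothetical.

```java
/** Hypothetical reduction of a depth map to the nearest obstacle in each horizontal direction. */
final class NearestObstacle {

    /**
     * Splits the image into `sectors` vertical bands (left to right) and returns the
     * smallest valid depth found in each band.
     * Assumptions: depth[row][col] is in metres; NaN and non-positive values mean
     * "no reading" and are skipped; a band without any valid reading yields +infinity.
     */
    static double[] nearestPerSector(double[][] depth, int sectors) {
        if (sectors <= 0) {
            throw new IllegalArgumentException("sectors must be positive");
        }
        double[] nearest = new double[sectors];
        for (int s = 0; s < sectors; s++) {
            nearest[s] = Double.POSITIVE_INFINITY;
        }
        for (double[] row : depth) {
            for (int col = 0; col < row.length; col++) {
                double d = row[col];
                if (Double.isNaN(d) || d <= 0.0) {
                    continue; // skip invalid pixels
                }
                // Map the column to its band; long arithmetic avoids overflow on wide images.
                int sector = (int) ((long) col * sectors / row.length);
                nearest[sector] = Math.min(nearest[sector], d);
            }
        }
        return nearest;
    }
}
```

Each value in the returned array could then be paired with the direction of its band and passed to whatever mapping drives the belt, so that the nearest obstacle in each direction is felt at the matching position around the waist.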

Project dissemination

The ACCESSPACE project was promoted in various mainstream and technical media, such as:

- On RTL, a French national radio station

- On PhDTalent, a platform and network for PhD students who wish to transition to industry

- On Guide Néret, a specialized website on disability in France

- On Acuité, a specialized website for opticians that covers news on visual impairment

- On Oxytude, a weekly podcast reviewing news related to visual impairment

- On FIRAH, a French foundation for applied research on disability